The Curious Case of the JSON BOM

Recently, I was testing some interop with Azure Service Bus, which has a rather useful feature when used with Azure Functions: you can bind JSON directly to a custom type, to do something like:

    [FunctionName("SaySomething")]
    public static void Run(
        [ServiceBusTrigger("Endpoints.SaySomething", Connection = "SbConnection")] SaySomething command,
        ILogger log)
    {
        log.LogInformation($"Incoming message: {command.Message}");
    }

As long as we have valid JSON, everything should "just work". However, when I sent a JSON message to receive, I got a...rather unhelpful message:

[4/4/2019 2:50:35 PM] System.Private.CoreLib: Exception while executing function: SaySomething. Microsoft.Azure.WebJobs.Host: Exception binding parameter 'command'. Microsoft.Azure.WebJobs.ServiceBus: Binding parameters to complex objects (such as 'SaySomething') uses Json.NET serialization.

1. Bind the parameter type as 'string' instead of 'SaySomething' to get the raw values and avoid JSON deserialization, or

2. Change the queue payload to be valid json. The JSON parser failed: Unexpected character encountered while parsing value: ?. Path '', line 0, position 0.

Not too great! So what's going on here? Where did that parsed value come from? Why do the path, line, and position point to the beginning of the stream? The answer leads us down a path of encoding, RFC standards, and the .NET source code.

Diagnosing the problem

Looking at the error message, it appears we can try to do exactly what it asks and bind to a string and just deserialize manually:

    [FunctionName("SaySomething")]
    public static void Run(
        [ServiceBusTrigger("Endpoints.SaySomething", Connection = "SbConnection")] string message,
        ILogger log)
    {
        var command = JsonConvert.DeserializeObject<SaySomething>(message);
        log.LogInformation($"Incoming message: {command.Message}");
    }

Deserializing the message like this actually works; we can run with no problems! But it's still strange: why did it work with a string, but not when binding automatically from the wire message?

On the wire, the message payload is simply a byte array. So something could be happening when reading the bytes into a string, which can be a bit "lossy" depending on the encoding used. To fully understand what's going on, we need to know how the text was encoded to see how it should be decoded. Binding to a string clearly "fixes" the problem, but I don't see it as a viable solution.

To dig further, let's drop down our messaging binding to its lowest form to get the raw bytes:

    [FunctionName("SaySomething")]
    public static void Run(
        [ServiceBusTrigger("Endpoints.SaySomething", Connection = "SbConnection")] byte[] message,
        ILogger log)
    {
        // Decode the raw wire bytes ourselves instead of letting the binding do it.
        var value = Encoding.UTF8.GetString(message);
        var command = JsonConvert.DeserializeObject<SaySomething>(value);
        log.LogInformation($"Incoming message: {command.Message}");
    }

Going this route, we get our original exception:

[4/4/2019 3:58:38 PM] System.Private.CoreLib: Exception while executing function: SaySomething. Newtonsoft.Json: Unexpected character encountered while parsing value: ?. Path '', line 0, position 0.

Something is clearly different between getting the string value through the Azure Functions/ServiceBus trigger binding, and going through Encoding.UTF8. To see what's different, let's look at that value:

{"Message":"Hello World"}

That looks fine! However, let's grab the raw bytes from the stream:

EFBBBF7B224D657373616765223A2248656C6C6F20576F726C64227D

And put that in a decoder:

ï»¿{"Message":"Hello World"}

Well there's your problem! A bunch of junk characters at the beginning of the string. Where did those come from? A quick search for those characters reveals the culprit: our wire format included the UTF-8 byte order mark (BOM) of 0xEF,0xBB,0xBF. Whoops!
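To make the diagnosis repeatable, here is a minimal sketch that checks a payload for the three BOM bytes and compares two ways of decoding it. The class and method names are made up for illustration, and the StreamReader path is only shown as one BCL decoder that drops the BOM; I'm not claiming it is what the Service Bus binding does internally.

```csharp
using System;
using System.IO;
using System.Text;

public static class BomInspection
{
    // Converts a hex string such as "EFBBBF7B" into the bytes it represents.
    public static byte[] FromHex(string hex)
    {
        var bytes = new byte[hex.Length / 2];
        for (var i = 0; i < bytes.Length; i++)
        {
            bytes[i] = Convert.ToByte(hex.Substring(i * 2, 2), 16);
        }
        return bytes;
    }

    // True when the payload starts with the UTF-8 BOM bytes EF BB BF.
    public static bool StartsWithUtf8Bom(byte[] payload)
    {
        return payload.Length >= 3
            && payload[0] == 0xEF && payload[1] == 0xBB && payload[2] == 0xBF;
    }

    // Encoding.GetString decodes every byte, so the BOM survives as U+FEFF.
    public static string DecodeRaw(byte[] payload)
    {
        return Encoding.UTF8.GetString(payload);
    }

    // StreamReader detects the BOM by default and leaves it out of the text.
    public static string DecodeWithReader(byte[] payload)
    {
        using (var reader = new StreamReader(new MemoryStream(payload)))
        {
            return reader.ReadToEnd();
        }
    }
}
```

Feeding the hex dump above through FromHex, StartsWithUtf8Bom reports true, DecodeRaw returns a string whose first character is the invisible U+FEFF, and DecodeWithReader returns just the JSON text.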

JSON BOM'd

Having the UTF-8 BOM in our wire message messed some things up for us, but why should that matter? It turns out that the JSON specification (RFC 8259) forbids adding a BOM to transmitted JSON text (emphasis mine):

JSON text exchanged between systems that are not part of a closed ecosystem MUST be encoded using UTF-8 [RFC3629].

Previous specifications of JSON have not required the use of UTF-8 when transmitting JSON text. However, the vast majority of JSON-based software implementations have chosen to use the UTF-8 encoding, to the extent that it is the only encoding that achieves interoperability.

Implementations MUST NOT add a byte order mark (U+FEFF) to the beginning of a networked-transmitted JSON text. In the interests of interoperability, implementations that parse JSON texts MAY ignore the presence of a byte order mark rather than treating it as an error.

Now that we've identified our culprit, why did our code sometimes succeed and sometimes fail? It turns out that we do really need to care about the encoding of our messages, and even when we think we pick sensible defaults, this may not be the case.

Looking at the documentation for the Encoding.UTF8 property, we see that the Encoding objects have two important toggles:

- Should it emit the UTF-8 BOM identifier?

- Should it throw for invalid bytes?

We'll get to the second one shortly, but what we can see from the documentation and the code is that Encoding.UTF8 says "yes" to the first question and "no" to the second. However, if you use Encoding.Default, it's different! On .NET Core, it will be "no" for the first question and "no" for the second.
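Both toggles can be observed directly from an Encoding instance. The sketch below is illustrative only; Utf8Toggles and its method names are hypothetical helpers, not anything from the framework.

```csharp
using System;
using System.Text;

public static class Utf8Toggles
{
    // Number of BOM bytes this encoding asks writers to emit (3 or 0 for UTF-8).
    public static int PreambleLength(Encoding encoding)
    {
        return encoding.GetPreamble().Length;
    }

    // True when decoding invalid bytes throws instead of substituting U+FFFD.
    public static bool ThrowsOnInvalidBytes(Encoding encoding)
    {
        try
        {
            // 0xFF can never appear in well-formed UTF-8.
            encoding.GetString(new byte[] { 0xFF });
            return false;
        }
        catch (DecoderFallbackException)
        {
            return true;
        }
    }
}
```

With these helpers, Encoding.UTF8 reports a 3-byte preamble and does not throw, while new UTF8Encoding(false, true) reports no preamble and does throw. The two constructor arguments are exactly the two toggles: encoderShouldEmitUTF8Identifier and throwOnInvalidBytes.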

Herein lies our problem - the JSON spec says that the encoded bytes must not include the BOM, but that parsers may ignore one. Between "does" and "does not", our implementation went on the "does not" side of "may".

We can't really affect the decoding of bytes to string or bytes to object in Azure Functions (or it's rather annoying to), but perhaps we can fix the problem at its source - the JSON being encoded with a BOM in the first place.
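For contrast, here is roughly what the lenient side of "may" could look like for a receiver that has the raw bytes in hand. This is a sketch to make the spec language concrete, not a recommended fix, and LenientJsonBytes is a hypothetical helper name.

```csharp
using System;
using System.Text;

public static class LenientJsonBytes
{
    // Decodes a UTF-8 payload to a string, skipping a leading BOM if one is present.
    public static string DecodeIgnoringBom(byte[] payload)
    {
        var preamble = Encoding.UTF8.GetPreamble(); // EF BB BF
        var offset = 0;
        if (payload.Length >= preamble.Length
            && payload[0] == preamble[0]
            && payload[1] == preamble[1]
            && payload[2] == preamble[2])
        {
            offset = preamble.Length;
        }
        return Encoding.UTF8.GetString(payload, offset, payload.Length - offset);
    }
}
```

The resulting string could then be handed to JsonConvert.DeserializeObject as in the earlier byte[] snippet, at the cost of every receiver needing to remember to do this.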

When debugging, I noticed that Encoding.UTF8.GetBytes() did not return any BOM, but clearly I'm getting one here. So what's going on? It gets even muddier when we start to introduce streams.

Crossing streams

Typically, when dealing with I/O, you're dealing with a Stream. And typically again, if you're writing to a stream, you're dealing with a StreamWriter, whose default behavior is UTF-8 encoding without a BOM. The comments in its source are interesting here, as they say:

    // The high level goal is to be tolerant of encoding errors when we read and very strict
    // when we write. Hence, default StreamWriter encoding will throw on encoding error.

So StreamWriter is "no-BOM, throw on error" but StreamReader is Encoding.UTF8, which is "yes for BOM, no for throwing error". Each option is opposite the other!
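The difference between Encoding.UTF8.GetBytes(), a default StreamWriter, and a StreamWriter handed Encoding.UTF8 is easy to check by writing to a MemoryStream and looking at the bytes. A minimal sketch, with WriterBomCheck being a made-up helper name:

```csharp
using System.IO;
using System.Text;

public static class WriterBomCheck
{
    // Bytes produced by Encoding.GetBytes alone: the preamble is never included.
    public static byte[] ViaGetBytes(string text)
    {
        return Encoding.UTF8.GetBytes(text);
    }

    // Bytes produced by a StreamWriter; pass null to use StreamWriter's own default encoding.
    public static byte[] ViaStreamWriter(string text, Encoding encoding)
    {
        var stream = new MemoryStream();
        using (var writer = encoding == null
            ? new StreamWriter(stream)
            : new StreamWriter(stream, encoding))
        {
            writer.Write(text);
        }
        // MemoryStream.ToArray still works after the writer has closed the stream.
        return stream.ToArray();
    }
}
```

For the text "{}", ViaGetBytes and the default StreamWriter both produce 2 bytes, while ViaStreamWriter with Encoding.UTF8 produces 5: the BOM followed by the 2 bytes of JSON. Passing new UTF8Encoding(false) brings it back to 2.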

If we're using a vanilla StreamWriter, we still shouldn't have a BOM. Ah, but we aren't! I was using NServiceBus to generate the message (I'm lazy that way) and its Newtonsoft.Json serializer to generate the message bytes. Looking underneath the covers, we see the default reader and writer explicitly pass in Encoding.UTF8 for both reading and writing. This is very likely not what we want for writing, since the default behavior of Encoding.UTF8 is to include a BOM.

The quick fix is to swap out the encoding with something that's a better default here in our NServiceBus setup configuration:

    var serialization = endpointConfiguration.UseSerialization<NewtonsoftSerializer>();
    serialization.WriterCreator(s =>
    {
        // UTF8Encoding(false) means "do not emit the UTF-8 BOM".
        var streamWriter = new StreamWriter(s, new UTF8Encoding(false));
        return new JsonTextWriter(streamWriter);
    });

We have a number of options here, such as just using the default StreamWriter, but in my case I'd rather be very explicit about the options I want to use.

The longer fix is a pull request to patch this behavior so that the default writer will not emit the BOM (but will need a bit of testing since technically this changes the wire format).

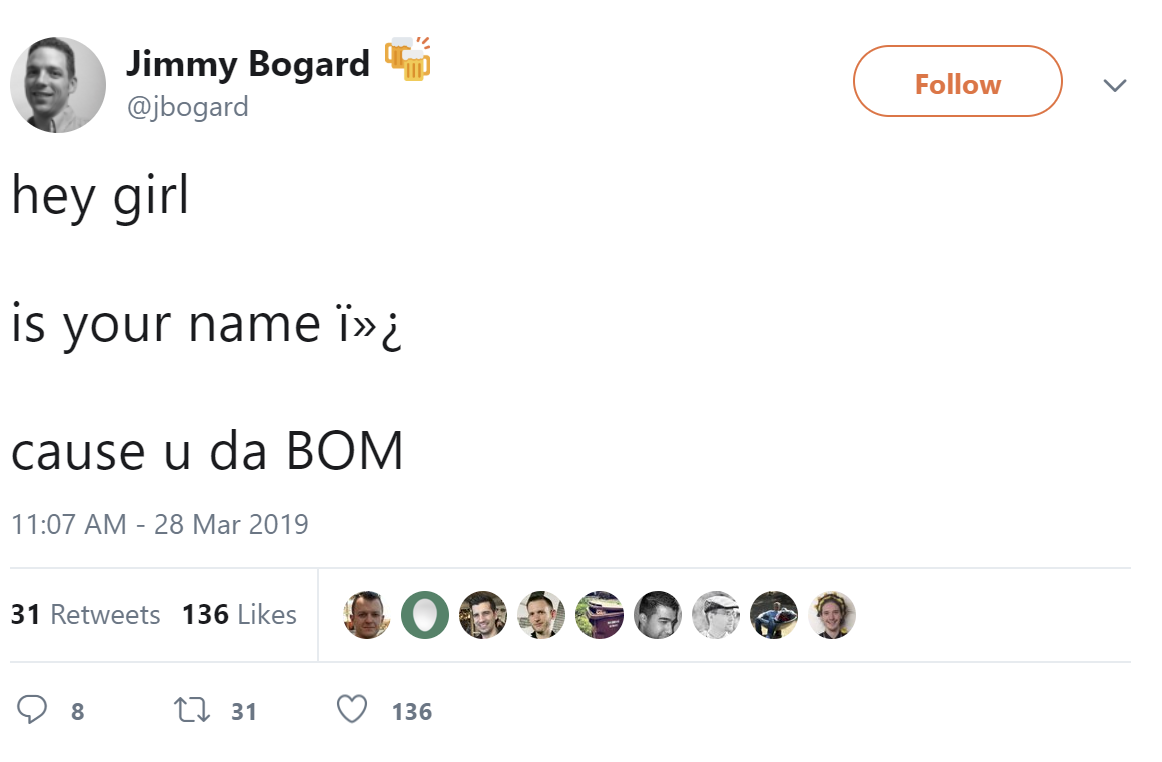

So the moral of the story - if you see weird characters like ï»¿ showing up in your text, enjoy a couple of days digging into character encoding and making really bad jokes.